The Inference Economy: LLM Inference Is Everywhere, Not Just in Your Chatbot

When most people think of Large Language Models (LLMs), they think of chatbots. However, this perspective drastically understates the true scope and technical necessity of the inference market. Inference is much more than typing a prompt and receiving a response. In reality, it’s become a basic compute primitive that powers the entire AI lifecycle.

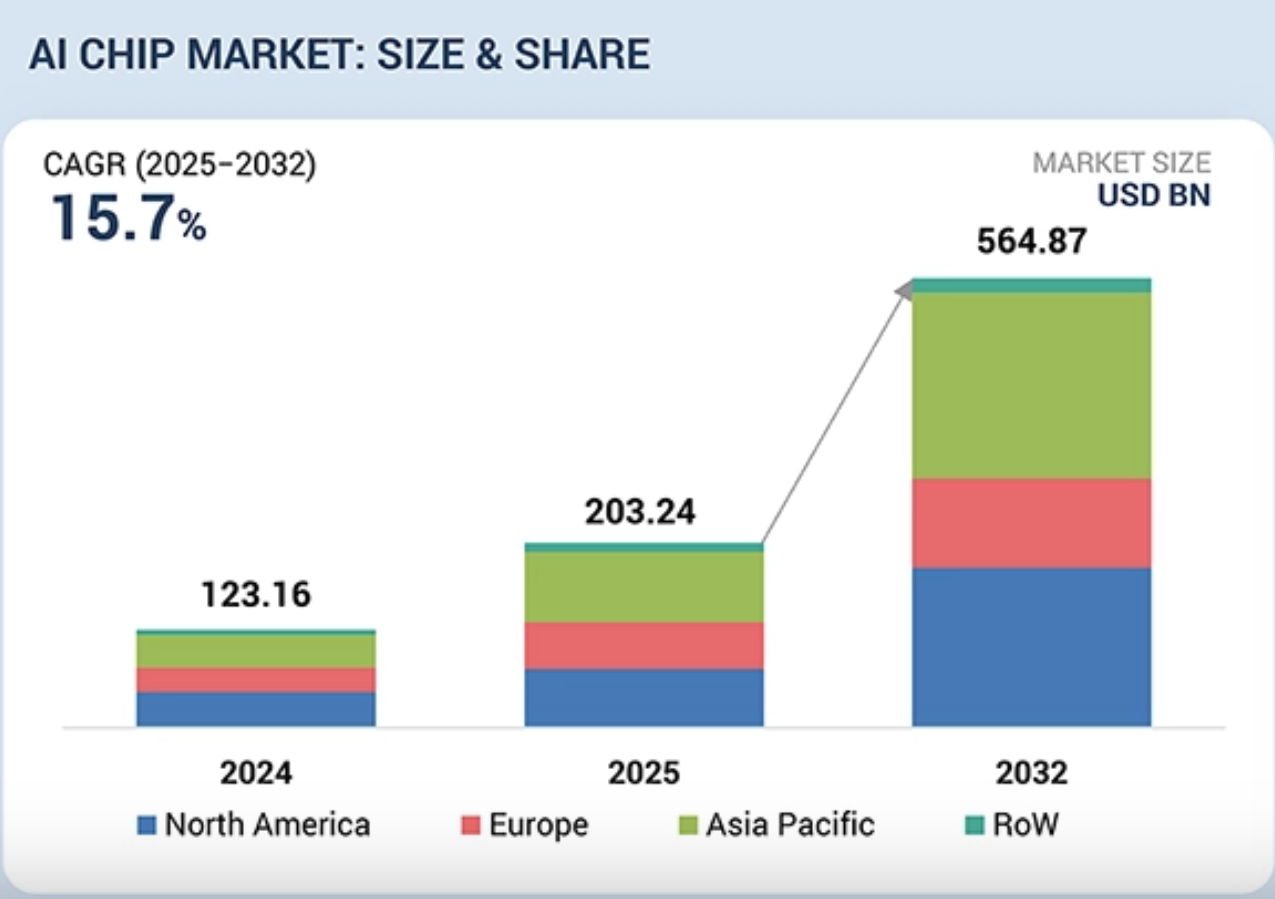

As we move toward 2028, the data center landscape is changing drastically. The AI accelerator Total Addressable Market (TAM) is projected to explode from a $45B baseline in 2023 to over $500B. Within this growth, inference is the biggest driver, expanding at a CAGR of over 80%. To put this in perspective, NVIDIA’s Chief Financial Officer, Colette Kress, reported that as early as 2024, approximately 40% of data center revenue was already attributed to AI inference.

At ElastixAI, we recognize that the Inference Economy is the engine of modern intelligence. By significantly reducing the cost and energy per token, we are enabling a massive ecosystem of background processes that make modern AI possible.

The Hidden Tech Behind Inference

To understand why the inference market is significantly larger than the chatbot use case, one must look at the hidden workloads that consume the vast majority of modern compute cycles.

Synthetic Data Generation and Knowledge Distillation

The industry is quickly approaching a data wall where high-quality, human-generated data is becoming scarce. To overcome this, developers are beginning to use massive "teacher" models to generate synthetic datasets. This process involves running billions of inference tokens to create structured data that is then used to train smaller, more efficient "student" models in a process known as Knowledge Distillation. In this paradigm, inference is actually a critical input for the next generation of model training.

Reinforcement Learning from AI Feedback (RLAIF)

The move from raw pre-trained models to helpful assistants relies heavily on Reinforcement Learning. While Reinforcement Learning with Human Feedback (RLHF) was the early standard, the scale required today has pushed the industry toward RLAIF.

Reward Model Scoring: During training, a separate Reward Model performs inference on thousands of potential model outputs to score their quality.

AI Feedback loops: Instead of human rankers, judge models perform constant inference to provide feedback to the primary model, creating a continuous loop of compute-heavy refinement.

Verifiable Rewards: In high-stakes environments, inference is used to run verifiable reward scripts that check for logic, code execution, or mathematical accuracy.

Agentic and Tool-Use Loops

Many believe that the next frontier of AI involves agents that can take action for users. An agentic workflow is rarely a single inference call. Instead, it is a recursive loop:

Plan: The model performs inference to decide on a course of action.

Act: The model calls a tool (e.g., a database or search engine).

Observe: The model performs inference on the tool’s output.

Refine: The model iterates until the goal is met. One simple user request might trigger 10 to 50 internal inference calls. This agentic multiplier is a primary reason why the inference market is outstripping the training market.

The Economics of the Token: Why Efficiency is the Only Path Forward

As the market approaches that $500B+ valuation, the looming bottlenecks are the economic and energy costs per token. If an agentic loop requires 50 times more compute than a simple chatbot, the cost of that compute must drop by a corresponding margin to remain commercially viable.

By optimizing the inference primitive, we can affect the entire AI lifecycle:

In Training: Cheaper inference allows for more exhaustive RLAIF and synthetic data generation at a fraction of the current cost.

In Production: Lowering the cost per token makes complex agentic loops and multi-step reasoning profitable.

In Sustainability: By reducing energy per token, we enable massive scaling of AI infrastructure without an equivalent surge in environmental impact.

ElastixAI’s Approach

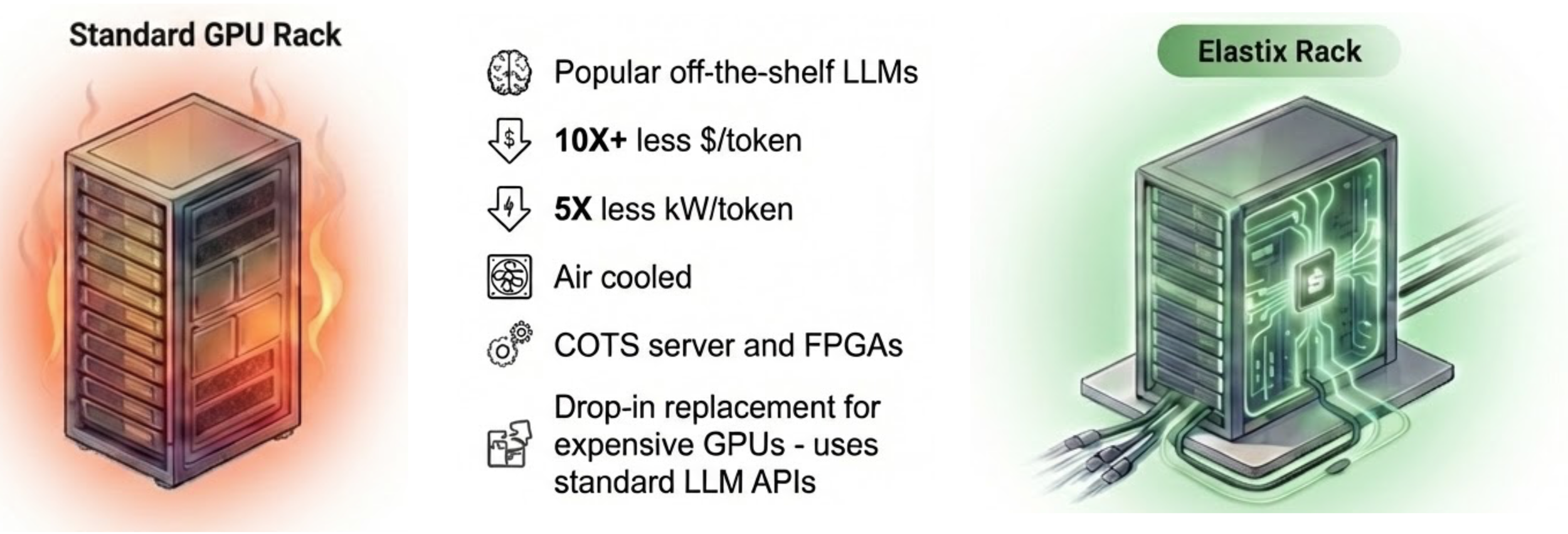

The standard approach to inference often relies on brute-force scaling. In contrast, ElastixAI’s approach prioritizes drastic reductions in energy consumption and latency by replacing general-purpose GPUs with dynamic, algorithm-specific processors.

The ElastixAI solution decreases cost and energy per token.

While traditional GPUs are designed years in advance and waste power on inactive chip areas, ElastixAI uses reconfigurable FPGA hardware to generate a custom processor design optimized for a given model's requirements in seconds. By dynamically co-designing the hardware, software, and LLM stack, the platform achieves a 5-50x TCO-per-token advantage and up to 80% lower power consumption than existing Nvidia or AMD workflows.

Furthermore, the system achieves a step-function reduction in latency and costs by providing native hardware support for advanced optimizations. Whereas fixed-logic GPUs require inefficient software workarounds for these techniques, ElastixAI’s co-designed data paths move only the necessary bits, reducing data transfer overhead and power consumption while realizing theoretical performance gains often left on the table by traditional infrastructure.

Our Vision

The inference market is the defining market of the next decade. Most observers look at a chatbot and see a product. We look at the infrastructure and see a primitive that is currently under-optimized and misunderstood.

Our mission at ElastixAI is to provide the methodology and tools necessary to power this Inference Economy. By attacking the cost and energy barriers, we enable the hidden applications that will eventually represent the vast majority of all AI-driven compute.

Inference is everywhere. It's time our infrastructure started acting like it.

Ready to optimize your inference strategy? To learn more about how full-stack co-design can reduce your energy footprint, email info@elastix.ai to explore the revolutionary technology we are building.